April 18, 2006

Security Gymnastics - Risk-based from RSA, security model rebuilding from MS, and taking revocation to the next level?

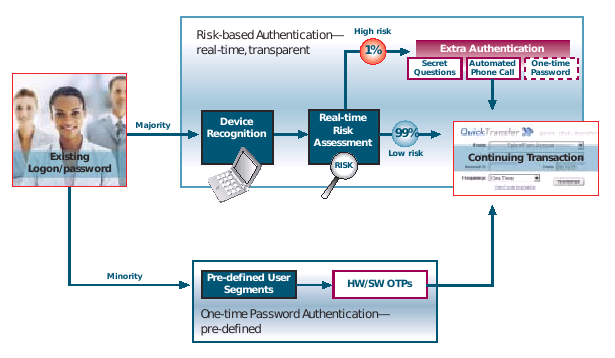

RSASecurity is now pushing a thing called Adaptive Authentication:

The risk-based authentication module is a behind-the-scenes technology that is designed to score the level of risk associated with a given activity or transaction - like account logon, bill payment or wire transfer - in real time. If that activity exceeds a predetermined risk threshold the user is prompted for an additional authentication credential to validate his or her identity. ...One-time password authentication offers tangible, time-honored security for the segments of your user base who routinely engage in sufficiently risky activities or who feel better protected by physical security and will reward your institution for providing this through consolidation of assets increased willingness to transaction online.
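A minimal sketch of the score-then-step-up flow that the blurb describes. The activity weights, the threshold, and every name here are invented for illustration; nothing in it reflects RSA's actual product.

```python
from dataclasses import dataclass

# Hypothetical weights and threshold, chosen only for illustration.
ACTIVITY_RISK = {"logon": 10, "bill_payment": 30, "wire_transfer": 60}
RISK_THRESHOLD = 50


@dataclass
class Activity:
    kind: str                  # "logon", "bill_payment" or "wire_transfer"
    amount: float = 0.0        # transaction amount, if any
    known_device: bool = True  # has this user been seen on this device before?


def risk_score(activity: Activity) -> int:
    """Score a single activity; a higher score means a riskier activity."""
    score = ACTIVITY_RISK.get(activity.kind, 40)  # unknown kinds get a middling score
    if activity.amount > 1000:
        score += 20
    if not activity.known_device:
        score += 25
    return score


def required_authentication(activity: Activity) -> str:
    """Ask for an extra credential only when the score exceeds the threshold."""
    if risk_score(activity) > RISK_THRESHOLD:
        return "password+otp"
    return "password"
```

Under these made-up numbers a plain logon passes with a password alone, while a large wire transfer, or a bill payment from an unfamiliar device, triggers the one-time password prompt.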

It's hard to find a pithy statement of reliability amongst the hypeware, but basically this seems to be offering a choice of authorisation methods, based on the risk. The essence here is four-fold. Firstly, we are now seeing the penny drop for suppliers: security is proportional to risk. Secondly, note the per-transaction emphasis. RSASecurity are halfway to a real solution to the meccano / MITB threat, but given their current line-up of products, they are stuck for the time being. Thirdly, this confirms the Cyota trend spotted before. That's smart of them.

Finally, recall the security model that we all now dare not mention (#11). It's war with Eurasia, as always. Further hints of this have been revealed over at emergent chaos, where Adam comments on Infocard:

For me, the useful sentence is that 'Infocard is software that packages up identity assertions, gets them signed by a identity authority and sends them off to a relying party in an XML format. The identity authority can be itself, and the XML is SAML, or an extension thereof, and the XML is signed and encrypted.'

Spot it? As reported earlier, Microsoft is moving to put Infocard in place of any prior security model that might have existed (whatever its unname) and as we now are rebuilding the security model from scratch, it makes sense to ... have the user issue her own credentials as that will build user-base support. Oh-la-la! I wonder if they are going to patent that idea?

Over at the NIST conference on PKI, they had lots of talks on trust (!), revocation (!) and sessions on Digital Signatures (!) and also Browser Security (!). I wish I'd been there. I skimmed through the PPT for the Invited Talk - Enabling Revocation for Billions of Consumers (ppt) by Kelvin Yiu, Microsoft, for any correlations to the above (I found none).

But I did find that IE7 will have revocation enabled by default. What this means in terms of CRL and OCSP checking is unclear, but one curious section entitled "Taking Revocation to the Next Level" described (sit down before reading this) a fix in TLS that will enable the web server to pre-fetch and cache the OCSP response and deliver it to the browser. All in TLS. Apparently, this act of security gymnastics is called "stapling":

Revocation in Windows Vista: How TLS "Stapling" Scales

Contoso returns its certificate chain and the OCSP response in the TLS handshake

Stapling reduces load on the CA to # of servers, not clients

I kid you not. According to Microsoft, revocation is to be bundled in TLS - although I would like people to check those slides because I have a sneaking suspicion that NeoOffice wasn't displaying all the content. Anyone from the TLS side care to correct? Please?
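To make the slide's scaling claim concrete, here is a toy model, not real TLS or OCSP code. The class names, the one-hour cache lifetime, and the dictionary standing in for a signed response are all assumptions made for illustration.

```python
import time


class CountingResponder:
    """Stand-in for a CA's OCSP responder; counts how many responses it signs."""

    def __init__(self):
        self.signed = 0

    def sign_status(self, cert_id: str) -> dict:
        # A real responder would produce a signed OCSP response here.
        self.signed += 1
        return {"cert": cert_id, "status": "good", "produced_at": time.time()}


class StaplingServer:
    """Web server that caches one OCSP response and staples it to handshakes."""

    def __init__(self, cert_id: str, responder: CountingResponder, max_age: float = 3600.0):
        self.cert_id = cert_id
        self.responder = responder
        self.max_age = max_age  # hypothetical cache lifetime in seconds
        self._cached = None

    def handshake(self) -> dict:
        """Return the certificate chain plus the cached (stapled) status."""
        now = time.time()
        if self._cached is None or now - self._cached["produced_at"] > self.max_age:
            self._cached = self.responder.sign_status(self.cert_id)
        return {"chain": [self.cert_id], "ocsp_response": self._cached}
```

In this model, three servers handling a thousand handshakes each cause the responder to sign three responses rather than three thousand, which is the "# of servers, not clients" point.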

Posted by iang at April 18, 2006 02:46 PM

TLS Stapling looks like it will reduce the load on CAs for OCSP, since OCSP responses have to be signed like certificates. This means these responses are expensive to turn around in real time.

Even if the CA does not pregenerate and cache OCSP responses, TLS Stapling will allow the web server to cache the OCSP response - so it does not have to be signed for every single relying party.

Posted by: Morgan Collett at April 18, 2006 07:01 AM

when we were doing x9.59

http://www.garlic.com/~lynn/subpubkey.html#x959

http://www.garlic.com/~lynn/x959.html#x959

and aads

http://www.garlic.com/~lynn/x959.html#aads

starting in mid-90s we called it *parameterized risk management* ... sort of an extension of some of the transaction fraud scoring ... looking at individual transactions ... amount of the transaction, where the transaction originated, what kind of merchant the transaction was coming from, possibly what kind of purchases ... as well as sequence/aggregation of transactions, patterns, etc. ... types of stuff that you can do in an online paradigm system (can't do with an offline, stale, static, redundant and superfluous certificate paradigm).

this was then coupled with the overall integrity level of the token, and number of authentication factors involved (potentially requesting additional authentication factors for higher risk operations). furthermore, given the kind of token to integrate the integrity level (as opposed to a fixed integrity level recorded), the token integrity level could be updated in real time as circumstances changed and evolved (a token last month good for a million dollar transaction might only be good for a five hundred dollar transaction this month ... if some vulnerability in particular tokens had been discovered).

If it was a token that had to be used with terminals (POS terminal, ATM terminal) ... it could also take into account the integrity characteristics of the terminal (as well as changes to integrity of the terminal as technology advances over time) and various other physical location characteristics of the terminal that might increase or decrease occurrence of risk/fraud.

a trivial example was somewhat the state-of-the-art with biometrics at that point. biometrics was a fuzzy match and the infrastructure chose a fixed biometric scoring threshold ... above the threshold it would be accepted, below the threshold it would be rejected (this is somewhat the source of all the stuff in biometrics for false negatives and false positives). parameterized risk management just took the biometric scoring value and included it with the rest of the calculations. if a particular biometric scoring value, combined with all the other calculations, was less than the dynamic threshold necessary ... the transaction might be rejected or another biometric value requested. the biometric state-of-the-art of the period would have had totally different deployed infrastructures for low-value and high-value payments. parameterized risk management had the same online, realtime infrastructure and real-time parameterized risk management would dynamically adjust the requirements to meet the overall risks of a particular operation.
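A rough sketch of the idea, under loudly stated assumptions: the logarithmic requirement, the weights, and the function names are all hypothetical, picked only to show a raw biometric score feeding into one dynamic calculation instead of being cut off at a fixed threshold.

```python
import math


def required_assurance(amount: float) -> float:
    """Assurance needed grows with the value at risk (hypothetical log scale)."""
    return math.log10(max(amount, 1.0))


def available_assurance(token_integrity: float, factors: int,
                        biometric_score: float = 0.0) -> float:
    """Combine the token's current integrity rating, the number of
    authentication factors, and the raw biometric match score (0..1)."""
    return token_integrity + 0.5 * (factors - 1) + 2.0 * biometric_score


def decide(amount: float, token_integrity: float, factors: int,
           biometric_score: float = 0.0, max_factors: int = 3) -> str:
    """Accept, ask for another factor, or reject, based on the overall risk."""
    need = required_assurance(amount)
    have = available_assurance(token_integrity, factors, biometric_score)
    if have >= need:
        return "accept"
    if factors < max_factors:
        return "request_additional_factor"
    return "reject"
```

With these invented numbers, a token rated 6.5 clears a million dollar transaction on its own; if a discovered vulnerability drops its rating to 3.0, the same token still clears a five hundred dollar transaction but the million dollar one now prompts for an additional factor.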

"dynamic authentication" is essentially just a small subset of parameterized risk management.

the commonly used and well-known example of fraud scoring from the period was about a credit card being used for a $1 fuel purchase in LA (checking to see if lost/stolen card had been deactivated with no risk to the thief) followed within 20 minutes by the purchase of certain other kinds of goods.
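That pattern can be written as a simple sequence rule. This is only an illustrative sketch: the category names in `HIGH_RISK_CATEGORIES` are made up (the "certain other kinds of goods" are not named here), and real fraud scoring would weigh many more signals.

```python
from datetime import datetime, timedelta

PROBE_CATEGORY = "fuel"
PROBE_MAX_AMOUNT = 1.00
PROBE_WINDOW = timedelta(minutes=20)
# Hypothetical stand-ins for the "certain other kinds of goods".
HIGH_RISK_CATEGORIES = {"electronics", "jewelry"}


def flag_probe_then_purchase(transactions):
    """Flag purchases that follow a small fuel 'probe' within the window.

    transactions: (timestamp, category, amount) tuples for a single card,
    sorted by timestamp.
    """
    flagged = []
    last_probe = None
    for ts, category, amount in transactions:
        if category == PROBE_CATEGORY and amount <= PROBE_MAX_AMOUNT:
            last_probe = ts  # remember the most recent probe-like purchase
        elif (last_probe is not None
              and category in HIGH_RISK_CATEGORIES
              and ts - last_probe <= PROBE_WINDOW):
            flagged.append((ts, category, amount))
    return flagged
```

Because the rule looks at the sequence of transactions on a card rather than any single one, it is the kind of check that needs the online view of the account.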

course i've always claimed that parameterized risk management is just another application of Boyd for agile and adaptable operation.

misc. past posts mentioning Boyd

http://www.garlic.com/~lynn/subboyd.html#boyd

misc. references to Boyd from around the web

http://www.garlic.com/~lynn/subboyd.html#boyd2

the other comes out of the dynamic adaptive resource management (aka a kind of dynamic scoring of everything that went on) that I did as undergraduate in the 60s. It was picked up and included in products shipped at the time. In the mid-70s, some of it was dropped in mainframe operating system transition from 360s to 370s. However, I was allowed to re-introduce it within a couple years as the Resource Manager product ... 30th anniversary of the blue letter announcing the Resource Manager comes up on May 11th.

Posted by: Lynn Wheeler at April 18, 2006 11:44 AM