April 12, 2019

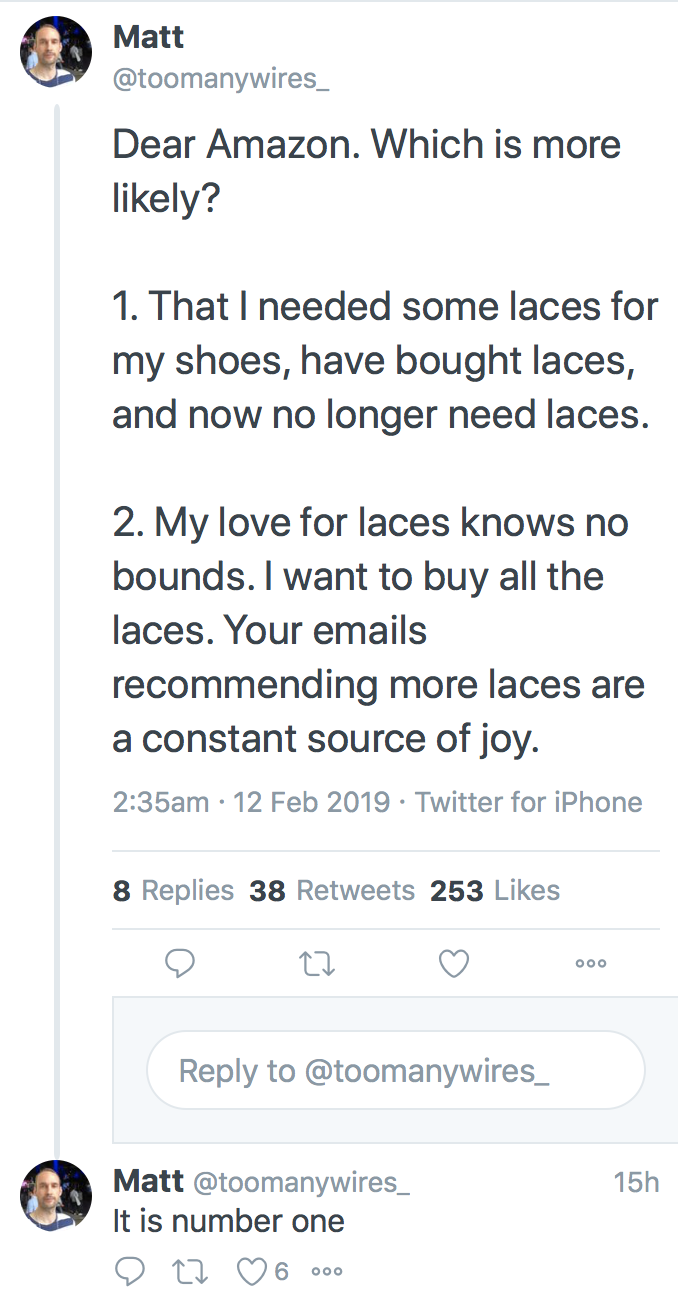

Matrix of 4 Types of Identity

@lochaiching reminds me that I made a 2 x 2 matrix of the 4 competing Types of Identity:

To be read with the earlier piece "4 Types of Identity". This is just a thought experiment to see if there are alignments between the different schools. Thoughts?

February 12, 2019

4 Types of Identity

In the video I did a few years ago, I explored 4 notions of Identity. These have seemed to survive some scrutiny, a little test of time. It appears a bit easier to call them by labels, but words can be too political. NB: now in Chinese: 四种身份认证的类型.

How about numbers? There are four Types of Identity. They are:

Type 1 - your State ID

The state is the one that started the concept of identity as a national system. Reputedly all the way back to Napoléon who invented the passport for young men to show they had done their national service.

Napoléon's design was meant to stop nationals from leaving the country. These days it is the reverse in both meanings - the modern day passport is meant to stop foreigners entering the country.

A definition of Type 1 would be a document that is issued to a single person to uniquely identify that person and grant a limited number of rights. For a passport, entry to the home country. For a driver licence, the right to drive on public roads. For those countries with state IDs, these tend to be required for a much larger range of services.

The state's ID goes a bit beyond just the passport. It starts at registration of birth, and a certificate for that event. This makes the birth certificate a 'breeder document', which sounds like an unfortunate choice of words. As many of us have lost our birth certificates, we have to revert to "transcripts" or "copies" when some other document is needed, and so the modern paperchase is begun.

Other than travelling, the primary use case for state ID is to restrict services. Without it, you cannot have them. This has the unfortunate side effect of casting a lesser or larger number of people into the zone of exclusion, such that their rights are taken away from them for absence of the appropriate Type 1 Identity.

Note that ID stands for Identification Document, not for Identity. But the two are commonly and ignorantly commingled, and to use them in the same sentence is a sign that the author is short of full understanding.

Type 2 - I am who I think I am

Normally when we talk about identity, we talk about what's in our heads - our consciousness, our personality, our secret fears and desires.

Psychologists view identity as starting at birth - with the baby as basically an empty vessel. Baby finds Mother, but it is only the appearance of other persons such as Father that causes baby to question what these persons really are; and by a process of triangulation to realise that baby is someone too.

Baby becomes child becomes adult - through 20 odd years of experiences. Layered, simultaneous, emotional and boring.

The result is the new adult's Identity. Which has nothing to do with the above, the state's "Identity." Which in part explains why the state and others like the corporation have so much difficulty. What they call "my identity" is in complete denial of what I call my Identity, and while they keep doing that, I'm resistant.

Type 3 - Corporate Records

Unlike the state, the corporation has more limits on defining who you are, and has to make do with collecting information from other sources. To make up for this disadvantage corporations have collected more and more data, to the point where they might have challenged and overtaken the state's erstwhile monopoly.

From school to employment to Facebook, the collection of data that each calls their copy of your identity has grown. How big? The number of bytes does not matter; what does matter is that no one person in the corporation knows what it all is, nor what it means.

Nor would you if you were given it or asked to control it. But no matter how much we dislike it, we are captured. None of us could turn off our job, resign from Facebook, search for the end of google, decline to be Amazon Premium. As Pam Dixon hints, we're owned by someone, just not sure who:

"The issue of who owns identity is particularly contentious, and we will not delve into that topic here. Suffice it to note that there is much disagreement about who owns identity. Each stakeholder individuals, governments, corporations, and so forth, have a different answer."

It is fair to say that this type of Identity is out of control. It's certainly out of our control. And states have no effective control given the paucity of effect their laws have had, with the exception that proves the rule being the EU's recent GDPR. One might like to think that at least the corporations are in control of the collection, but that is hard to see as there is simply too much of it for one person to understand.

Imagine there was a data controller at Facebook who handled Your Identity. You know, appointed under GDPR. A person you could have a chat with, much like a therapist or a priest or a grandmother. She could delve into the collection of you and answer all your questions, because she's got them, right there. Make you feel at ease. Make you feel like you belong. You're a treasured member of the family.

Not really. People can't do that. People can't delve so deeply into the lives of others and still retain their own humanity. Type 3 Identity is therefore out of control of even the corporations that collect it.

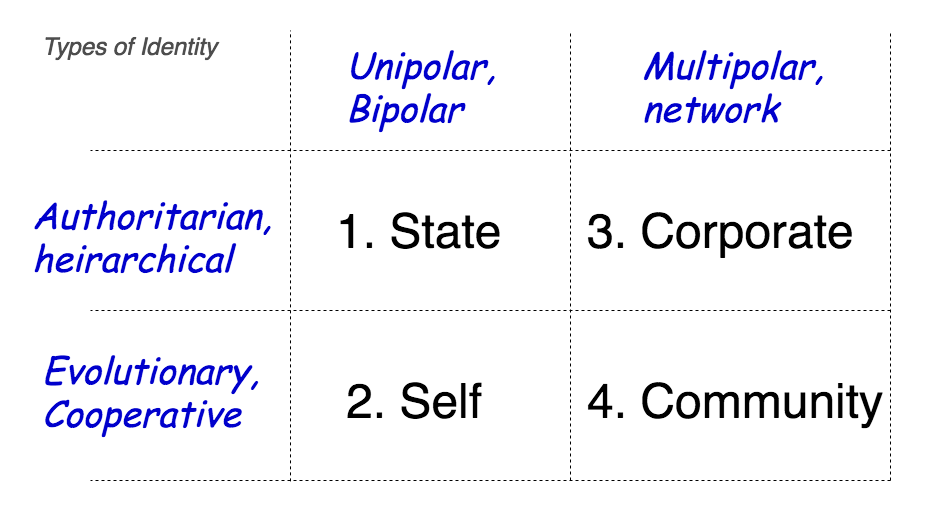

Type 4 - I am who you think I am

Who I think I am started off with me as a newborn baby discovering there was Mother and Father. This neat destruction of the simple unity of Mother-me-milk-everything triggered the challenge of my lifetime - finding out who I am.

Yet, each discovery of who I am came not from me in isolation but from an interaction with another: parents, siblings, relatives, friends, school-mates, university chums... etc and etc for ever. These people play a part - it isn't just me.

My self isn't just in my head. In some bifurcated sense, my self is in my head and in the heads of everyone I interact with on a frequent basis. My peer group has one consensus on who I am. My family another. My school, yet another. Manyfold, intersectional, overlaid and rather complicated, every defined group of people has "me" within them.

This is like the social graph, but it is more than that. It is the you within you that includes a slice of me.

So what?

The primary point of the taxonomy of you, as it were, is that there are 4 strikingly different views. There is some cross-over but the contrast exceeds it in my opinion. And the clear & present danger is that, due to the very distinct foundations of each form of the word, commingling the Types is a trap.

So much so that when one group habitually talks of one type, a second group is confused by assuming another form. When the state pushes its control over identity (Type 1), people get scared (Type 2). The same contradiction happens when the bank tells you that you've become victim to "identity theft" (Type 3). In some sense, we know that a bungling of identification or records with personality is a fallacious use of the term, but we remain powerless to object to the deception.

"That's not me!"

When the CAs sell you an identity certificate, everyone ignores it, in part because it's not what it says on the tin. When Whatsapp reports back your identity (Type 4) to you, that it purchased from Amazon without your permission, likewise there is concern.

"Identity" such as it is, is a morass, doomed to failure until we clear up the terms.

The secondary point is that these terms are entirely discordant with someone's expectations. I'll go further - the clash of world views is leading us to one of the great wars of this new century, up there with pensions, financial restructuring and global warming. The silver lining is that if we lose this war for our identity, we won't have to worry about global warming because animals don't care about the weather, that's the farmer's job.

The tertiary point is that Type 4 somewhat survives the devastating critique of modern abuse. What my friend thinks of me is so far unabused territory, albeit with some knocks from the mostly superficial efforts of social media farmers. It's also digitally sane, assuming the concept of reliable statements, which can only be done with real and intentional consent. So it's actually protected from abuse, because Facebook and Google and Amazon cannot touch real. But it's also possible to implement in the worst possible ways, taking us back to grim visions of 1984 and Stasi-run East Germany.

Nobody's really finished the book on this as yet, but we're working on it. Watch this space.

Editor's note: this post 4 Types of Identity is also now in Chinese: 四种身份认证的类型 thanks to the extraordinary efforts of @lochaiching.

October 21, 2018

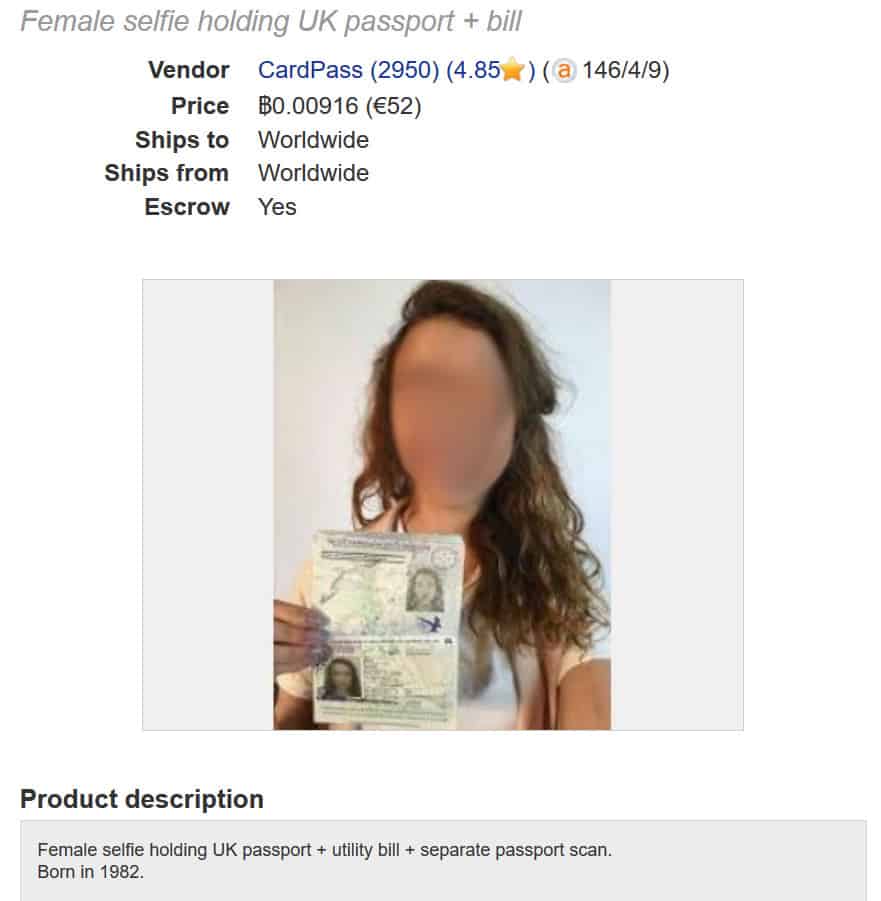

ID Dox - now it's getting personal - Andreas spoofed

Writes Andreas Antonopoulos, a noted Bitcoin commentator, that he has been impersonated with a mere scan!

More than anything else this points at the fallacy of Identity Documents as the God of our Identity. AA may very well be a victim of our penultimate post on cheap-as-chips scans of your identity.

What's becoming clear is that identity is garnering more attention. Unwittingly, orgs and peoples who thought they had this under control are being dragged into the quagmire caused by firstly the Internet, then the upheavals caused by the great financial crisis and the drugs wars, and finally the devil of all devils, blockchain. Where will it end?

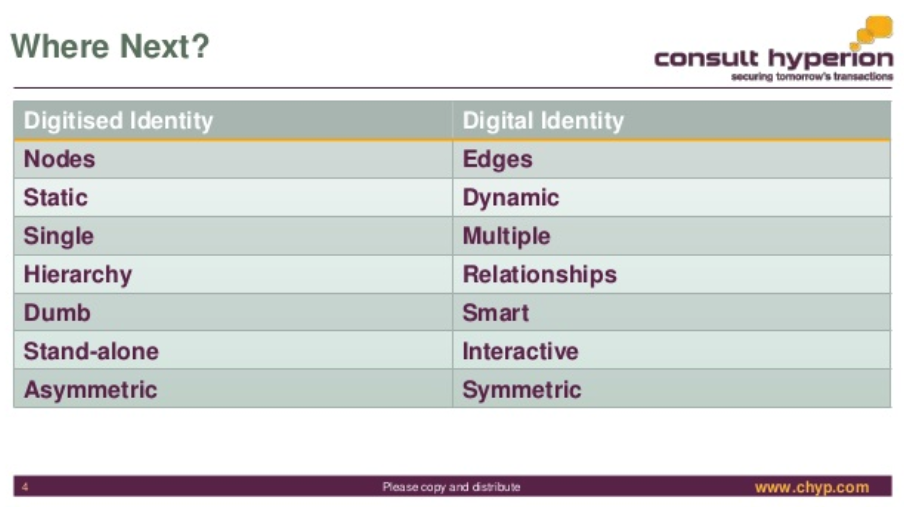

Meanwhile, here comes The Award-Winning David G.W. Birch @dgwbirch another understated twitter persona with slides and solutions for your identity:

The Award-Winning David G.W. Birch @dgwbirch A Short, Strategic Comment on Digital Identity by @chyppings #authentication #authorisation slideshare.net/15Mb/a-short-s <- A short keynote for the Biometrics Congress in London. People liked it and asked for a copy so I've uploaded it to SlideShare.

I post this for debate not for endorsement ;-)

I'd also like to point out that it is unfortunate that <blockchain> is not a HTML entity, because that's what gets typed these days.

February 14, 2018

when we teach everyone to trust ID documents...

From Queanbeyan, Australia:

Police have issued a warning after a Queanbeyan mother said she let two strangers into her home and presented her infant children to them for inspection, after the pair apparently lied about being from Family and Community Services (FACS).

The two people went to the home in Karabar, just outside of Canberra, on Friday afternoon and presented the mother with identification purportedly from the NSW Government department.

The pair, a man and a woman, claimed they were at the home to check on the welfare of her children, despite the family having had no prior interactions with FACS or police.

The mother said her two six-month-old twins were asleep and she could call the pair when they woke up, however the impostors instead said they would wait at the home until the babies were ready.

Soon after, the mother brought the children to the lounge room to meet the strangers, and the pair checked both the babies and their bedroom before leaving the house.

After the visit aroused the woman's suspicions, she contacted Queanbeyan FACS, which confirmed none of its workers were due to visit the woman.

The male visitor was slim, white and in his 30s, about 183cm tall with dark black hair, while the woman with him was 170cm tall and in her 20s.

She had dark hair with a blonde streak in it, and was wearing a distinctive orange blazer at the time of the visit.

Police urge vigilance in checking IDs

Detective Chief Inspector Neil Grey said the incident was "disturbing", and reminded residents of what to look out for on a government ID.

"FACS have confirmed that all caseworkers in the Southern District carry photo ID with their name, job title and FACS logo," he said.

"The ID that was produced was good enough to fool the young mother into letting them into the house.

"Generally speaking workers from the Department of Family and Community services will ring prior to attending the address anyway."

...

January 04, 2018

Hackers selling access to Aadhaar

TRIBUNE INVESTIGATION SECURITY BREACH

Rs 500, 10 minutes, and you have access to billion Aadhaar details

Group tapping UIDAI data may have sold access to 1 lakh service providers

Rachna Khaira

Tribune News Service

Jalandhar, January 3

It was only last November that the UIDAI asserted that Aadhaar data is fully safe and secure and there has been no data leak or breach at UIDAI. Today, The Tribune purchased a service being offered by anonymous sellers over WhatsApp that provided unrestricted access to details for any of the more than 1 billion Aadhaar numbers created in India thus far.

It took just Rs 500, paid through Paytm, and 10 minutes in which an agent of the group running the racket created a gateway for this correspondent and gave a login ID and password. Lo and behold, you could enter any Aadhaar number in the portal, and instantly get all particulars that an individual may have submitted to the UIDAI (Unique Identification Authority of India), including name, address, postal code (PIN), photo, phone number and email.

What is more, The Tribune team paid another Rs 300, for which the agent provided software that could facilitate the printing of the Aadhaar card after entering the Aadhaar number of any individual.

When contacted, UIDAI officials in Chandigarh expressed shock over the full data being accessed, and admitted it seemed to be a major national security breach. They immediately took up the matter with the UIDAI technical consultants in Bangaluru.

Sanjay Jindal, Additional Director-General, UIDAI Regional Centre, Chandigarh, accepting that this was a lapse, told The Tribune: "Except the Director-General and I, no third person in Punjab should have a login access to our official portal. Anyone else having access is illegal, and is a major national security breach."

...

read more.

June 16, 2017

Identifying as an artist - using artistic tools to generate your photo for your ID

Copied without comment:

Artist says he used a computer-generated photo for his official ID card

A French artist says he fooled the government by using a computer-generated photo for his national ID card.

Raphael Fabre posted on his website and Facebook what he says are the results of his computer modeling skills on an official French national ID card that he applied for back in April.

Le 7 avril 2017, j'ai fait une demande de carte d'identité à la mairie du 18e. Tous les papiers demandés pour la carte étaient légaux et authentiques, la demande a été acceptée et j'ai aujourd'hui ma nouvelle carte d'identité française.

La photo que j'ai soumise pour cette demande est un modèle 3D réalisé sur ordinateur, à l'aide de plusieurs logiciels différents et des techniques utilisées pour les effets spéciaux au cinéma et dans l'industrie du jeu vidéo. C'est une image numérique, où le corps est absent, le résultat de procédés artificiels. L'image correspond aux demandes officielles de la carte : elle est ressemblante, elle est récente, et répond à tous les critères de cadrage, lumière, fond et contrastes à observer. Le document validant mon identité le plus officiellement présente donc aujourd'hui une image de moi qui est pratiquement virtuelle, une version de jeu vidéo, de fiction.

In English: On April 7, 2017, I applied for an identity card at the town hall of the 18th arrondissement. All the papers requested for the card were legal and authentic, the request was accepted and I now have my new French identity card.

The photo I submitted for this request is a 3D model made on a computer, using several different software packages and techniques used for special effects in cinema and in the video game industry. It is a digital image, where the body is absent, the result of artificial processes. The image meets the official requirements for the card: it is a good likeness, it is recent, and it meets all the criteria for framing, light, background and contrast. The document that most officially validates my identity therefore now presents an image of me that is practically virtual, a video game version, a fiction. The portrait, the photo booth and the request receipt were shown at the r-2 Gallery for the Exposition Agora.

The image is 100 percent artificial, he says, made with special-effects software usually used for films and video games. Even the top of his body and clothes are computer-generated, he wrote (in French) on his website.

But it worked, he says. He followed guidelines for photos and made sure the framing, lighting, and size were up to government standards for an ID. And voila: He now has what he says is a real ID card with a CGI picture of himself.

"Absolutely everything is retouched, modified, and idealized"

He told Mashable France that the project was spurred by his interest in discerning what's artificial and real in the digital age. "What interests me is the relationship that one has to the body and the image ... absolutely everything is retouched, modified, and idealized. How do we see the body and identity today?" he said.

In an email, he said that the French government isn't aware yet of his project that just went up on Facebook earlier this week, but "it is bound to happen."

Before he received the ID, the CGI portrait and his application were on display at an Agora exhibit in Paris through the beginning of May.

Now, if the ID is real, it looks like his art was impressive enough to fool the French government.

March 13, 2017

Robin Hood Talk - Identity - Who am I?

Transcript of talk given in 2015 on the meaning of Identity on video here https://www.youtube.com/watch?v=2ITif1OBVX8. The specific question asked was, where will we be in 5 years, in 2020? I might have captured it for a purpose of my own, which is now published as "My Identity"...

There are multiple schools about Identity. Who am I? There are several schools that will tell you who I am.

The western narrative generally works around the notion that the state will register my name and therefore give me an Identity. This is one thing that states love, and if you look in the United Nations Charter for the Rights of the Child, it says in there that every child has the right to a state granted Identity, which is the most Orwellian thing I've ever read. It really says that every state has the right to give every child an identification number and control their lives. But the UN being the UN gets away with that sort of stuff.

Alternatively there is the psychological school of thought, which says your Identity is inside yourself. You've got the super-Ego, the Ego and the Id, is one particular scientific or pseudo-scientific theory, and then there's various other things such as the development of the child's brain, which goes through various phases in which it discovers the notion -- the first thing that it discovers is that there's something called a Mother.

It doesn't know that it's called a Mother, but Mother provides for sustenance. When it hurts, it cries. And then the second thing that it discovers is, there's a Father, who happens to be around whenever the Mother is around. This leads to the creation within the brain that says, there's Me, because there's Father and Mother. The world started off with Mother, and then there's Father, which forces the child to recognise Me. This is the beginning of a long journey as the brain learns who I am, which goes on through childhood, teenagership and so forth, which psychologists have mapped out.

That's a second school of thought. Then there's a third school of thought that says, your Identity is this (holds up laptop) - the computer or smartphone - this is my Identity. On this thing which you all recognise as being a computer, is everything known about me. When I go out into social networks, when I write stuff, I've got all my secrets in there, all the details of my life, if I lose that, I'm screwed. I've got backups, sure, but it'll take me 2 months to get through them, and get them sorted out.

If you like, there's a huge opportunity out there in the world today, where people have recognised that the states are not doing a good job at Identity, and we're not doing a good job at our own Identity, so there's an opportunity for corporations to come in and provide Identity.

You ask me where we're going to be in 5 years' time, the corporations' 5 year plan, which gives them 2020 vision, is that they will place your Identity on their servers. You can see this happening with Facebook, and Amazon and Apple and Google, they're all competing for your Identity.

That's a third view, and it's fair to say we're very uncomfortable with that, they're very comfortable with it, but I'm not sure they understand the endgame there.

Then there's a fourth view, and this is to go back to what is Identity. Identity is such that me having a feeling of self is fine, but what was this thing about me seeing Mother, me seeing Father, me understanding there are several people here.

(5 mins BEEP - oh do I get an extension?)

Actually my Identity is not what's inside me, my Identity is what's inside you. You're all looking at me, you're wondering who the hell this guy is. That is my Identity. In the sense that I can impress you by speaking good stuff, or I can't impress you, I'm speaking nonsense, that perception as a group, will carry forward as my Identity into future meetings, into future years, into future blog posts. People will say Iang said this stuff, and I remember, I saw, I heard, so my Identity is in all your heads. It's not in my head at all.

Which brings us to, if you like, the fourth school, or possibility of what happens in the future, and that is, how do we get you as a group, how do I get you as a group, to protect and nurture and be nice to my Identity? And it's this, I think, which is the magic of the African invention of the chama, where in a low trust society -- before we were talking about Google, Apple, Amazon, states, is that a low trust society? I think we can see it that way. Not as bad as Africa, but in a low trust society, what we do is we come together as a group, where we already trust each other, and we work to protect each other, as a group.

So how do we form those groups? That's the story of Africa, and I think this is going to be their biggest export in the future, how we come together as groups.

It's interesting, if you go back and look at those various stories of Identity, I can't do this by myself because I can't store and analyse all the information. I can't put it into my brain and make it all happen as all these techies talk about it: we've gotta store all this data and analyse it with data mining techniques, Bayesian statistics. I can't do it in my brain!

The state can't do it either, because the state can only collect that which it is empowered to collect. It can trick the UN into getting the right to identify me with a number, it can do that. But what richness can it get out of that? Not very much.

Google can store huge amounts of data, it can store all the data, but it can only store what I can give it for free. The same with Apple, Amazon, Facebook. Only that which we can store up there for free, information that is valued at approximately zero, can then be collated to be approximately slightly more valuable.

So we have a conundrum. I can't do it, the state can't do it, Google can't do it. But a group could do it. If a group had the software, that collected information, if I was part of a group and I willingly gave them valuable information about me, myself, and the group protected that information -- because it's my group and we're all members of the same group -- then I could build a situation where I would be comfortable inside my group. My group would be comfortable with me. And we can work together.

And I think that is the opportunity. In five years' time, we will know whether we got the mega-corporations holding my Identity or whether we managed to take it back.

February 27, 2017

Today I'm trying to solve my messaging problem...

Financial cryptography is that space between crypto and finance which by nature of its inclusiveness of all economic activities, is pretty close to most of life as we know it. We bring human needs together with the net marketplace in a secure fashion. It's all interconnected, and I'm not talking about IP.

Today I'm trying to solve my messaging problem. In short, tweak my messaging design to better support the use case or community I have in mind, from the old client-server days into a p2p world. But to solve this I need to solve the institutional concept of persons, i.e. those who send messages. To solve that I need an identity framework. To solve the identity question, I need to understand how to hold assets, as an asset not held by an identity is not an asset, and an identity without an asset is not an identity. To resolve that, I need an authorising mechanism by which one identity accepts another for asset holding, that which banks would call "onboarding" but it needs to work for people not numbers, and to solve that I need a voting solution. To create a voting solution I need a resolution to the smart contracts problem, which needs consensus over data into facts, and to handle that I need to solve the messaging problem.

Bugger.

A solution cannot therefore be described in objective terms - it is circular, like life, recursive, dependent on itself. Which then leads me to thinking of an evolutionary argument, which, assuming an argument based on a higher power is not really on the table, makes the whole thing rather probabilistic. Hopefully, the solution is more probabilistically likely than human evolution, because I need a solution faster than 100,000 years.

This could take a while. Bugger.
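
For the programmers in the audience, the loop above can be sketched as a tiny dependency walk. This is only an illustration: the labels in `DEPENDS_ON` are my shorthand for the problems named in the post, and `find_cycle` is a hypothetical helper, not part of any actual design.

```python
# Hypothetical map: each problem points at the one that must be solved first.
# The labels are shorthand for the chain described in the post.
DEPENDS_ON = {
    "messaging": "persons",
    "persons": "identity",
    "identity": "asset holding",
    "asset holding": "authorisation",
    "authorisation": "voting",
    "voting": "smart contracts",
    "smart contracts": "consensus",
    "consensus": "messaging",
}


def find_cycle(start, depends_on):
    """Follow the chain from start.

    Returns the list of problems forming a loop (first and last entries
    equal) if one is reached, or None if the chain simply ends.
    """
    path = []
    seen = set()
    current = start
    while current is not None:
        if current in seen:
            # We are back at a problem already visited: slice out the loop.
            return path[path.index(current):] + [current]
        seen.add(current)
        path.append(current)
        current = depends_on.get(current)
    return None


cycle = find_cycle("messaging", DEPENDS_ON)
print(" -> ".join(cycle))
```

Starting from messaging, the walk comes back to messaging, which is the whole problem: there is no leaf to start building from.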

March 10, 2014

How Bitcoin just made a bid to join the mainstream -- the choice of SSL PKI may be strategic rather than tactical

How long does an alternative payment system take to join the mainstream? With Paypal it was less than a year; when they discovered that the Palm Pilot users were preferring the website, the strategy switched pretty quickly. With goldmoney it was pretty much instant; with e-gold, they never achieved it.

With Bitcoin's new announcement, we can mark their intent as around four years or so. Belated welcome is perhaps due, if one thinks the mainstream is actually the place to be. Many do, although I have my reservations on this point and it is somewhat of a surprise to read of Bitcoin's choice of merchant authentication mechanism:

Everyone seems to agree - the public key infrastructure, that network of certificate authorities that stands between you and encrypting your website, sucks. It's too expensive. CAs don't do enough for the fees they charge. It's too big. There isn't enough competition. It's compromised by governments. The technology is old and crusty. We should all use PGP instead. The litany of complaints about the PKI is endless.

In recent weeks, the Bitcoin payment protocol (BIP 70) has started to roll out. One of the features present in version 1 is signing of payment requests, and the mechanism chosen was the SSL PKI.

Mike Hearn then goes on to describe why they have chosen the SSL PKI. The description reads like a mix between an advertisement, an attack on the alleged alternates (such as they are) and an apology. Suffice to say, he gets most of the argumentation as approximately right & wrong as 99% of the experts in the field do.

Several things stand out. I read from the article that there was little attempt to explore what might be called the "own alternative." From this I wonder if what is happening is that a conservative inner group are actually trying to push Bitcoin faster into the mainstream?

Choosing to push merchants to SSL PKI authentication would certainly be one way to do it. However, this is a dangerous strategy, and what I didn't see addressed was the vector of control issue. This was a surprise, so I'll bring it out.

A danger with the stated approach is that it opens up a clear attack on every merchant. Right now, merchants deal under the radar, or can do so, and caveat emptor widely rules in Bitcoinlandia. Once merchants are certified to trade by the CAs however, there is a vector of identification, and permission. There is evidence. Requirements for incorporation. There are trade records and trade purposes.

And, there is a CA which has ... what?

Terms & conditions. Unfortunately, T&C in the CA industry are little known, widely ignored, and not at all understood. Don't believe me? Ask anyone in the industry for a serious discussion about the legal contracts behind PKI and you will hear more stony silence than if you'd just proven to the UN that global warming was another Malthusian plot to prepare the world for the invasion of Martians. Still don't believe me? Check what CABForum's documents say about them. Stony silence, in words.

But they are real, they exist, and they are forceful. They are very much intended, as even when CAs don't understand them themselves, they mostly end up copying them.

One thing you will find in them is that most CAs will decline to do business with any person or party that does something illegal. Skipping the whys and wherefores, this means that any agency can complain to any CA about a merchant on any basis ("hasn't got a license in my state to do some random thing") and the CA is now in a tricky position. Tricky enough to decide where its profits come from.

Now, we hope that most merchants are honest and legal, and as mentioned above, maybe the strategy is to move in that direction in a more forceful way. The problem is that in the war against Bitcoin, as yet undeclared and still being conducted under diplomatic cover, any claim of illegality will take on a sort of state-credibility, and as we know when the authorities say that a merchant is acting against the law, the party is typically seen to be guilty until proven innocent &/or bankrupt. Factor in that it is pretty easy for an agency to take a line that Bitcoin is illegal per se. Factor in that all commercial CAs are now controlled via CABForum and are all aligned into one homogeneous equivalency (forget talk of competition, pah-lease...). Factor in that one sore thumb isn't worth defending, and sets a precedent. We should now see that all CAs will slowly but surely feel the need to mitigate against the threat to their business that is Bitcoin.

It won't be that way to begin with. One thing that Bitcoiners will be advised to do is to get a CA in a safe and remote country, one with spine. That will last for a while. But the forces will build up. The risk is that one day, the meme will spread, "we're not welcoming that business any more."

In military strategy, they say that the battle is won by the general that imposes his plan over the opponent, and I fear that choosing the SSL PKI may just be the opponent's move of choice, not Bitcoin's move of choice, no matter how attractive it may appear.

But what's the alternative, Mike Hearn asks? His fundamental claim seems to stand: there isn't a clear alternative.

This is true. If you ignore Bitcoin's purpose in life, if you ignore your own capabilities and you ignore your community, then ... I agree! If you ignore CAcert, too, I agree. There is no alternate.

But what would happen if you didn't ignore these things? Bitcoin's community is ideally placed to duplicate the system. We know this because it's been done in the past, and the text book is written. Indeed, long term readers will know that I am to some extent just copying the textbook in my current business, and I can tell you it certainly isn't as hard as getting Bitcoin up and rolling.

Capabilities? Well, actually when it comes to cryptographic protocols and reliable transactions and so forth, Bitcoin would certainly be in the game. I'm not sure why they would be so shy of this, as they are almost certainly better placed in this game than all the other CAs except perhaps the very biggest, and even that's debatable because it's been a long time since the biggest actually had the staff and know-how to do any game-changing. Bitcoin has got the backing of Google who almost certainly have more knowledge about this stuff than all the CAs combined, and most of the vendors as well (OK, so Microsoft might give them a run for their money if they could get out of the stables).

They've got the mission, the community, the capabilities and the textbook. Why then not? This is why I think that Bitcoin people have made a strategic decision to join the mainstream. If that's the case, then good luck, but boy-oh-boy! are they playing high-stakes poker here.

Old Chinese curse: be careful what you wish for.

December 23, 2013

We are all Satoshi Nakamoto

Rumours continue to circulate as to the person who wrote the Bitcoin paper. Occasionally they are directed at self, but they can equally be directed at all the superlative financial cryptographers listed on this site and many others.

Let's take a moment to ask what we are doing here.

*Satoshi Nakamoto chose to do his work in anonymity*.

Or more technically in pseudonymity, as someone who claims to be 'anon' leaves no individual name and cannot be connected forward or backwards in time.

He, assuming it is a he, probably had good reasons for this. Which leaves us pondering why we would disrespect those reasons?

We, all of us, in today's Bitcoin world and yesterday's precursors to that world: cypherpunks, crypto, FC, privacy, mobile money, Tor, the Internet in general, ... we all hold privacy as an article of faith. We built the thing to protect people, and protect their privacy.

If Satoshi Nakamoto chose privacy, by what right or motive do we breach that? None.

There is no doctrine that permits anyone out there to arbitrarily strip out someone's privacy and remain one of us. Quite the opposite: our beliefs and existence call for protecting this person.

Indeed, revealing a stated secret of someone is more or less a crime in many contexts. Intelligence agencies can of course spy on whosoever, but they are not allowed to reveal that information (or, so says the doctrine). Police can demand information, but only pursuant to a crime -- probable cause and all that.

Freedom of the press? Public figures are by their nature afforded less 'privacy' because they are public; but they chose that path, and the courts grant the ability of the paparazzi only certain abilities, in line with the public figures' choice of public life. Paparazzi aren't allowed to chase ordinary folk, and Satoshi Nakamoto clearly chose to be out of that game.

Freedom of the press doesn't cut it. Nor does whistleblower status. Nor does 'public interest' as the public has no conceivable benefit to knowing more.

Those people who are digging around trying to strip this person's privacy are doing so because they haven't been called on it. Because they think it is cool. Because they feel intellectually challenged by the use of nyms, and using a nym is a licence to curiosity.

Wrong.

I call you now: what you are doing is wrong.

In some places it is a crime, because breaching privacy presumes there is another crime to follow: theft or fraud or extortion. It's a good presumption. In all civilised places, breaching privacy is an anathema. We all in this business -- save those sad damned souls with 5 eyes -- are working to *protect our community* not single out vulnerable persons and burn them at the stake.

Get you gone, get you out of our community. Anyone who publicly reveals anyone else's private information has no common part with us. Anyone who goes on a witchhunt is our enemy. We are not doing all this work to give a few paparazzi a special scoop. To the person who eventually outs Satoshi Nakamoto I say this: the only place you'll be welcome is the NSA. Get ye there, scum.

In the words of that old film, we are all Satoshi Nakamoto.

December 08, 2011

Two-channel breached: a milestone in threat evaluation, and a floor on monetary value

Readers will know we first published the account on "Man in the Browser" by Philipp Gühring way back when, and followed it up with news that the way forward was dual channel transaction signing. In short, this meant the bank sending an SMS to your handy mobile cell phone with the transaction details, and a check code to enter if you wanted the transaction to go through.

On the face of it, pretty secure. But at the back of our minds, we knew that this was just an increase in difficulty: a crook could seek to control both channels. And so it comes to pass:

In the days leading up to the fraud being committed, [Craig] had received two strange phone calls. One came through to his office two-to-three days earlier, claiming to be a representative of the Australian Tax Office, asking if he worked at the company. Another went through to his home number when he was at work. The caller claimed to be a client seeking his mobile phone number for an urgent job; his daughter gave out the number without hesitation.

The fraudsters used this information to make a call to Craig's mobile phone provider, Vodafone Australia, asking for his phone number to be ported to a new device.

As the port request was processed, the criminals sent an SMS to Craig purporting to be from Vodafone. The message said that Vodafone was experiencing network difficulties and that he would likely experience problems with reception for the next 24 hours. This bought the criminals time to commit the fraud.

The unintended consequence of the phone being used for transaction signing is that the phone is now worth maybe as much as the fraud you can pull off. Assuming the crooks have already cracked the password for the bank account (something probably picked up on a market for pennies), the crooks are now ready to spend substantial amounts of time to crack the phone. In this case:

Within 30 minutes of the port being completed, and with a verification code in hand, the attackers were spending the $45,000 at an electronics retailer.

Thankfully, the abnormally large transaction raised a red flag within the fraud unit of the Commonwealth Bank before any more damage could be done. The team tried unsuccessfully to call Craig on his mobile. After several attempts to contact him, Craig's bank account was frozen. The fraud unit eventually reached him on a landline.

So what happens now that the crooks have walked with $45k of juicy electronics (probably convertible to cash at 50-70% off face over eBay)?

As is standard practice for online banking fraud in Australia, the Commonwealth Bank has absorbed the hit for its customer and put $45,000 back into Craig's account.

A NSW Police detective contacted Craig on September 15 to ensure the bank had followed through with its promise to reinstate the $45,000. With this condition satisfied, the case was suspended on September 29 pending the bank asking the police to proceed with the matter any further.

One local police investigator told SC that in his long career, a bank has only asked for a suspended online fraud case to be investigated once. The vast majority of cases remain suspended. Further, SC Magazine was told that the police would, in any case, have to weigh up whether it has the adequate resources to investigate frauds involving such small amounts of money.

No attempt was made at a local police level to escalate the Craig matter to the NSW Police Fraud and Cybercrime squad, for the same reasons.

In a paper I wrote in 2008, I stated that for some value below X, police wouldn't lift a finger. The Prosecutor has too much important work to do! What we have here is a very definite floor below which Internet systems which transmit and protect value are unable to rely on external resources such as the law. Reading more:

But the Commonwealth Bank claims it has forwarded evidence to the NSW and Federal Police forces that could have been used to prosecute the offenders.

The bank's fraud squad, which had identified the suspect transactions within minutes of the fraud being committed, was able to track down where the criminals spent the stolen money.

A spokesman for the bank said it dealt with both Federal and State (NSW) Police regarding the incident and that both authorities were advised on the availability of CCTV footage of the offenders spending their ill-gotten gains.

"The Bank was advised by one of the authorities that the offender had left the country, reducing the likelihood of further action by that authority," the spokesperson said.

This number goes up dramatically once we cross a border. In that paper I suggested 25k, here we have a reported number of $45k.

Why is that important? Because, some systems have implicit guarantees that go like "we do blah and blah and blah, and then you go to the police and all your problems are solved!" Sorry, not if it is too small, where small is surprisingly large. Any such system that handwaves you to the police without clearly indicating the floor of interest ... is probably worthless.

So when would you trust a system that backstopped to the police? I'll stick my neck out and say, if it is beyond your borders, and you're risking >> $100k, then you might get some help. Otherwise, don't bet your money on it.

October 26, 2011

Phishing doesn't really happen? It's too small to measure?

Two Microsoft researchers have published a paper pouring scorn on claims cyber crime causes massive losses in America. They say it's just too rare for anyone to be able to calculate such a figure.

Dinei Florencio and Cormac Herley argue that samples used in the alarming research we get to hear about tend to contain a few victims who say they lost a lot of money. The researchers then extrapolate that to the rest of the population, which gives a big total loss estimate, in one case of a trillion dollars per year.

But if these victims are unrepresentative of the population, or exaggerate their losses, they can really skew the results. Florencio and Herley point out that one person or company claiming a $50,000 loss in a sample of 1,000 would, when extrapolated, produce a $10 billion loss for America as a whole. So if that loss is not representative of the pattern across the whole country, your total could be $10 billion too high.

Having read the paper, the above is about right. And sufficient description, as the paper goes on for pages and pages making the same point.
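For those who like to see the arithmetic, here is a minimal sketch of the extrapolation being criticised. The $50,000 claim and the sample of 1,000 come from the quote; the population of 200 million is my assumption, picked because it reproduces the quoted $10 billion, and the function name is made up for illustration.

```python
def extrapolate_loss(sample_losses, sample_size, population):
    """Scale the mean reported loss per respondent up to a whole population."""
    mean_loss = sum(sample_losses) / sample_size
    return mean_loss * population


SAMPLE_SIZE = 1_000          # from the quoted example
POPULATION = 200_000_000     # assumption: chosen to match the quoted $10 billion

# One respondent claims $50,000; the other 999 report nothing.
with_outlier = extrapolate_loss([50_000], SAMPLE_SIZE, POPULATION)

# Same survey if that one respondent is unrepresentative and is left out.
without_outlier = extrapolate_loss([], SAMPLE_SIZE, POPULATION)

print(f"with the one claim:    ${with_outlier:,.0f}")
print(f"without the one claim: ${without_outlier:,.0f}")
```

One answer in a thousand moves the national total by the full $10 billion, which is the whole of the paper's point.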

Now, I've also been skeptical of the phishing surveys. So, for a long time, I've just stuck to the number of "about a billion a year." And waited for someone to challenge me on it :) Most of the surveys seemed to head in that direction, and what we would hope for would be more useful numbers.

So far, Florencio and Herley aren't providing those numbers. The closest I've seen is the FBI-sponsored report that derives from reported fraud rather than surveys. Which seems to plumb in the direction of 10 billion a year for all identity-related consumer frauds, and a sort of handwavy claim that there is a ratio of 10:1 between all fraud and Internet related fraud.

I wouldn't be surprised if the number was really 100 million. But that's still a big number. It's still bigger than the income of Mozilla, which is the 2nd browser by numbers. It's still bigger than the budget of the Anti-phishing Working Group, an industry-sponsored private thinktank. And CABForum, another industry-only group.

So who benefits from inflated figures? The media, because of the scare stories, and the public and private security organisations and businesses who provide cyber security. The above parliamentary report indicated that in 2009 Australian businesses spent between $1.37 and $1.95 billion in computer security measures. So on the report's figures, cyber crime produces far more income for those fighting it than those committing it.

Good question from the SMH. The answer is that it isn't in any player's interest to provide better figures. If so (and we can see support from the Silver Bullets structure) what is Florencio and Herley's intent in popping the balloon? They may be academically correct in trying to deflate the security market's obsession with measurable numbers, but without some harder numbers of their own, one wonders what's the point?

What is the real number? Florencio and Herley leave us dangling at that point. Are they setting up to provide those figures one day? Without that forthcoming, I fear the paper is destined to be just more media fodder as shown in its salacious title. Iow, pointless.

Hopefully numbers are coming. In an industry steeped in Numerology and Silver Bullets, facts and hard numbers are important. Until then, your rough number is as good as mine -- a billion.

June 09, 2011

1st round in Internet Account Fraud World Cup: Customer 0, Bank 1, Attacker 300,000

More grist for the mill -- where are we on the security debate? Here's a data point.

In May 2009, PATCO, a construction company based in Maine, had its account taken over by cyberthieves, after malware hijacked online banking log-in and password credentials for the commercial account PATCO held with Ocean Bank. ....

There are two ways to look at this: the contractual view, and the responsible party view. The first view holds that contracts describe the arrangement, and parties govern themselves. The second holds that the more responsible party is required to be <ahem> more responsible. PATCO decided to ask for the second:

A magistrate has recommended that a U.S. District Court in Maine deny a motion for a jury trial in an ACH fraud case filed by a commercial customer against its former bank. According to the order, which must still be reviewed by the presiding judge, the bank fulfilled its contractual obligations for security and authentication through its requirement for log-in and password credentials. ....

At issue for PATCO is whether banks should be held responsible when commercial accounts, like PATCO's, are drained because of fraudulent ACH and wire transfers approved by the bank. How much security should banks and credit unions reasonably be required to apply to the commercial accounts they manage?

"Obviously, the major issue is the banks are saying this is the depositors' problem," Patterson says, "but the folks that are losing money through ACH fraud don't have enough sophistication to stop this."

And lost.

David Navetta, an attorney who specializes in IT security and privacy, says the magistrate's recommendation, if accepted by the judge, could set an interesting legal precedent about the security banks are expected to provide. And unless PATCO disputes the order, Navetta says it's unlikely the judge will overrule the magistrate's findings. PATCO has between 14 and 21 days to respond.

"Many security law commentators, myself included, have long held that *reasonable security does not mean bullet-proof security*, and that companies need not be at the cutting edge of security to avoid liability," Navetta says. "The court explicitly recognizes this concept, and I think that is a good thing: For once, the law and the security world agree on a key concept."

My emphasis added, and it is an important point that security doesn't mean absolute security, it means reasonable security. Which from the principle of the word, means stopping when the costs outweigh the benefits.

But that is not the point that is really addressed. The question is (a) how we determine what is acceptable (not reasonable), and (b) if the Customer loses out when acceptable wasn't reasonable, whether there is any come-back.

In the disposition, the court notes that Ocean Bank's security could have been better. "It is apparent, in the light of hindsight, that the Bank's security procedures in May 2009 were not optimal," the order states. "The Bank would have more effectively harnessed the power of its risk-profiling system if it had conducted manual reviews in response to red flag information instead of merely causing the system to trigger challenge questions."

But since *PATCO agreed to the bank's security methods when it signed the contract*, the court suggests then that PATCO considered the bank's methods to be reasonable, Navetta says. The law also does not require banks to implement the "best" security measures when it comes to protecting commercial accounts, he adds.

So, we can conclude that "reasonable" to the bank meant putting in place risk-profiling systems. Which it then bungled (allegedly). However, the standard of security was as agreed in the contract, *reasonable or not*.

That is, *reasonable security* doesn't enter into it. More on that, as the observers try and mold this into a "best practices" view:

"Patco in effect demands that Ocean Bank have adopted the best security procedures then available," the order states. "As the Bank observes, that is not the law."

(Where it says "best" read "best practices" which is lowest common denominator, a rather different thing to best. In particular, the case is talking about SecurID tokens and the like.)

Patterson argues that Ocean Bank was not complying with the Federal Financial Institutions Examination Council's requirement for multifactor authentication when it relied solely on log-in and password credentials to verify transactions. Navetta agrees, but the court in this order does not.

"The court took a fairly literal approach to its analysis and bought the bank's argument that the scheme being used was multifactor, as described in the [FFIEC] guidance," Navetta says. "The analysis on what constitutes multifactor and whether some multifactor schemes [out of band; physical token] are better than others was discussed, and, to some degree, the court acknowledged that the bank's security could have been better. Even so, it was technically multifactor, as described in the FFIEC guidance, in the court's opinion, and "the best" was not necessary."

Navetta says the court's view of multifactor does not jibe with common industry understanding. Most industry experts, he says, would not consider Ocean Bank's authentication practices in 2009 to be true multifactor. "Obviously, the 'something you have' factor did not fully work if hackers were able to remotely log into the bank using their own computer," he says. "I think that PATCO's argument was the additional factors were meaningless since the challenge question was always asked anyway, and apparently answering it correctly worked even if one of the factors failed. In other words, it appears that PATCO was arguing that the net result of the other two factors failing was going back to a single factor."

This problem has been known for a long time. When the "best practices" approach is used, as in this FFIEC example, there is a list of things you do. You do them, and you're done. You are encouraged to (a) not do any better, and (b) cheat. The trick employed above, to interpret the term "multi-factor" in a literal fashion, rather than using the security industry's customary (and more expensive) definition, has been known for a long long time.
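PATCO's argument, as Navetta relays it, can be put in a few lines of code. This is only a sketch of the argument, not of Ocean Bank's actual system: the function and its three inputs are hypothetical, and the flow (an unrecognised device merely triggers a challenge question that is asked anyway) is an assumption taken from the quote.

```python
from itertools import product


def login_allowed(password_ok: bool, device_recognised: bool, challenge_ok: bool) -> bool:
    """Hypothetical login decision, following PATCO's description of the scheme."""
    if not password_ok:
        return False
    # Assumed flow: an unrecognised device is not rejected. It only triggers
    # the challenge question, which is asked in every case anyway.
    return challenge_ok


# Enumerate every combination and ask: does the device factor ever change the result?
outcomes = {
    (p, d, c): login_allowed(p, d, c)
    for p, d, c in product([True, False], repeat=3)
}
device_matters = any(
    outcomes[(p, True, c)] != outcomes[(p, False, c)]
    for p, c in product([True, False], repeat=2)
)

print("device factor changes the outcome:", device_matters)
```

Under those assumptions the "something you have" input never changes the decision, and what is left is a password plus a question, both of which are something you know.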

It's all part of the "best practices" approach, and the court may have been wise to avoid further endorsing it. There is now more competition in security practices, says this court, and you'll find it in your contract.

Caveat: as with all such cases, this is a preliminary ruling, and it can be overturned including several times... before we see a precedent.

November 06, 2010

I am Spartacus! and other dramatic "Identity" scripts

Name collisions are such fun! The apocryphal story of Spartacus, the slave-turned-revolutionary, has him being saved by all his slave-warriors standing up saying, "I am Spartacus!" The poor Roman Leaders had little grip on the situation, as they couldn't recall any biometrics for the guy.

A short time ago, I wandered into an office to get some service, and a nice lady grabbed me at the door, entered my name in and eventually queued me to a service desk. The poor woman at the computer couldn't work it out though, as having entered my first name into the computer, all the details were wrong. It was finally resolved when an attendant 2 desks away asked why she'd opened up his client's file ... who had the same first name. Apparently, my name is subject to TLCs, three-letter-collisions...

Luckily, that was easy to solve. My 4-letter name reduces to around 2 people in our field. Hopefully, this is all resolved in a seminal paper on the subject:

Global Names Considered Harmful by Mark Miller, Mark Miller, and Mark Miller

As reported by Bill Frantz, that's the paper.

Meanwhile, if you want to purchase one of these fabulous global names, some prices spotted a while back: As reported by Dave Birch somewhere, and I only copied this one line (before the link went south):

Authorities said Lominy charged $1,600 to $2,000 for a state driver's license...

And (this time with the link still working):

The gangs are setting up fake-ID factories using printers bought at high street shops. The Met has shut at least 20 factories in the last 18 months and believes more than 30,000 fake identities are in circulation.

Police examined 12,000 of them and established they were behind a racket worth £14 million. One £750 printer was withdrawn from sale at PC World after detectives revealed it could produce replicas of the proposed new ID card and EU driving licences.

...

Mr Mawer added: "There are people with dual identities, one real and one for committing crime." He revealed that specialist printers capable of making convincing ID documents such as EU driving licences could be bought for £750, though others cost £5,000.

I'm currently trying to get my drivers licence back, and so far it has cost $465, with more costs to come. At some point, the above deal looks good, and they throw in a free printer! A steal :)

Speaking of "Identity" I also watched a British drama of that name tonight. In true form, it's "gritty police drama," which is to say, a copy of a dozen other shows which make a habit of implausible scripts woven around too many cross-overs.

March 24, 2010

Why the browsers must change their old SSL security (?) model

In a paper, "Certified Lies: Detecting and Defeating Government Interception Attacks Against SSL," by Christopher Soghoian and Sid Stamm, there is a reasonably good layout of the problem that browsers face in delivering their "one-model-suits-all" security model. It is more or less what we've understood all these years, in that by accepting an entire root list of 100s of CAs, there is no barrier to any one of them going a little rogue.

Of course, it is easy to raise the hypothetical of the rogue CA, and even to show compelling evidence of business models (they cover much the same claims with a CA that also works in the lawful intercept business that was covered here in FC many years ago). Beyond theoretical or probable evidence, it seems the authors have stumbled on some evidence that it is happening:

The company's CEO, Victor Oppelman confirmed, in a conversation with the author at the company's booth, the claims made in their marketing materials: That government customers have compelled CAs into issuing certificates for use in surveillance operations. While Mr Oppelman would not reveal which governments have purchased the 5-series device, he did confirm that it has been sold both domestically and to foreign customers.

(my emphasis.) This has been a lurking problem underlying all CAs since the beginning. The flip side of the trusted-third-party concept ("TTP") is the centralised-vulnerability-party or "CVP". That is, you may have been told you "trust" your TTP, but in reality, you are totally vulnerable to it. E.g., from the famous Blackberry "official spyware" case:

Nevertheless, hundreds of millions of people around the world, most of whom have never heard of Etisalat, unknowingly depend upon a company that has intentionally delivered spyware to its own paying customers, to protect their own communications security.

Which becomes worse when the browsers insist, not without good reason, that the root list is hidden from the consumer. The problem that occurs here is that the compelled CA problem multiplies to the square of the number of roots: if a CA in (say) Ecuador is compelled to deliver a rogue cert, then that can be used against a CA in Korea, and indeed all the other CAs. A brief examination of the ways in which CAs work, and browsers interact with CAs, leads one to the unfortunate conclusion that nobody in the CAs, and nobody in the browsers, can do a darn thing about it.

So it then falls to a question of statistics: at what point do we believe that there are so many CAs in there, that the chance of getting away with a little interception is too enticing? Square law says that the chances are say 100 CAs squared, or 10,000 times the chance of any one intercept. As we've reached that number, this indicates that resistance to the temptation to intercept holds for all except 0.01% of circumstances. OK, pretty scratchy maths, but it does indicate that the temptation is a small but not infinitesimal number. A risk exists, in words, and in numbers.
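The scratchy maths, written down so it can at least be checked. This is a minimal sketch of the square-law argument only: it assumes 100 roots, treats every (compelled CA, victim CA) pairing as equally likely, and the function names are mine.

```python
def ca_pairs(num_cas: int) -> int:
    """Count (compelled CA, victim CA) combinations: any root can be used against any root."""
    return num_cas * num_cas


def share_per_pair(num_cas: int) -> float:
    """One pairing as a fraction of all pairings, assuming they are equally likely."""
    return 1 / ca_pairs(num_cas)


n = 100  # assumption: the rough size of the root list used in the text
print(ca_pairs(n))                  # number of pairings
print(f"{share_per_pair(n):.2%}")   # one pairing as a percentage
```

For 100 roots that is 10,000 pairings, and one in 10,000 is the 0.01% in the text.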

One CA can hide amongst the crowd, but there is a little bit of a fix to open up that crowd. This fix is to simply show the user the CA brand, to put faces on the crowd. Think of the above, and while it doesn't solve the underlying weakness of the CVP, it does mean that the mathematics of squared vulnerability collapses. Once a user sees their CA has changed, or has a chance of seeing it, hiding amongst the crowd of CAs is no longer as easy.

Why then do browsers resist this fix? There is one good reason, which is that consumers really don't care and don't want to care. In more particular terms, they do not want to be bothered by security models, and the security displays in the past have never worked out. Gerv puts it this way in comments:

Security UI comes at a cost - a cost in complexity of UI and of message, and in potential user confusion. We should only present users with UI which enables them to make meaningful decisions based on information they have.

They love Skype, which gives them everything they need without asking them anything. Which therefore should be reasonable enough motive to follow those lessons, but the context is different. Skype is in the chat & voice market, and the security model it has chosen is well-excessive to needs there. Browsing on the other hand is in the credit-card shopping and Internet online banking market, and the security model imposed by the mid 1990s evolution of uncontrollable forces has now broken before the onslaught of phishing & friends.

In other words, for browsing, the writing is on the wall. Why then don't they move? In a perceptive footnote, the authors also ponder this conundrum:

3. The browser vendors wield considerable theoretical power over each CA. Any CA no longer trusted by the major browsers will have an impossible time attracting or retaining clients, as visitors to those clients' websites will be greeted by a scary browser warning each time they attempt to establish a secure connection. Nevertheless, the browser vendors appear loathe to actually drop CAs that engage in inappropriate behavior; a rather lengthy list of bad CA practices that have not resulted in the CAs being dropped by one browser vendor can be seen in [6].

I have observed this for a long time now, predicting phishing until it became the flood of fraud. The answer is, to my mind, a complicated one which I can only paraphrase.

For Mozilla, the reason is simple lack of security capability at the *architectural* and *governance* levels. Indeed, it should be noticed that this lack of capability is their policy, as they deliberately and explicitly outsource big security questions to others (known as the "standards groups" such as IETF's RFC committees). As they have little of the capability, they aren't in a good position to use the power, no matter whether they would want to or not. So, it only needs a mildly argumentative approach on the behalf of the others, and Mozilla is restrained from its apparent power.

What then of Microsoft? Well, they certainly have the capability, but they have other fish to fry. They aren't fussed about the power because it doesn't bring them anything of use to them. As a corporation, they are strictly interested in shareholders' profits (by law and by custom), and as nobody can show them a bottom line improvement from CA & cert business, no interest is generated. And without that interest, it is practically impossible to get the various many groups within Microsoft to move.

Unlike Mozilla, my view of Microsoft is much more "external", based on many observations that have never been confirmed internally. However it seems to fit; all of their security work has been directed to market interests. Hence for example their work in identity & authentication (.net, infocard, etc) was all directed at creating the platform for capturing the future market.

What is odd is that all CAs agree that they want their logo on their browser real estate. Big and small. So one would think that there was a unified approach to this, and it would eventually win the day; the browser wins for advancing security, the CAs win because their brand investments now make sense. The consumer wins for both reasons. Indeed, early recommendations from the CABForum, a closed group of CAs and browsers, had these fixes in there.

But these ideas keep running up against resistance, and none of the resistance makes any sense. And that is probably the best way to think of it: the browsers don't have a logical model for where to go for security, so anything leaps the bar when the level is set to zero.

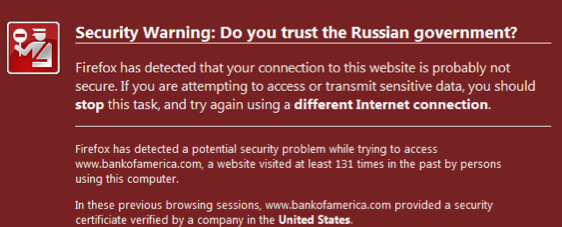

Which all leads to a new group of people trying to solve the problem. The authors present their model as this:

The Firefox browser already retains history data for all visited websites. We have simply modified the browser to cause it to retain slightly more information. Thus, for each new SSL protected website that the user visits, a Certlock enabled browser also caches the following additional certificate information:

A hash of the certificate.

The country of the issuing CA.

The name of the CA.

The country of the website.

The name of the website.

The entire chain of trust up to the root CA.

When a user re-visits a SSL protected website, Certlock first calculates the hash of the site's certificate and compares it to the stored hash from previous visits. If it hasn't changed, the page is loaded without warning. If the certificate has changed, the CAs that issued the old and new certificates are compared. If the CAs are the same, or from the same country, the page is loaded without any warning. If, on the other hand, the CAs' countries differ, then the user will see a warning (See Figure 3).
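The decision rule quoted above is simple enough to sketch. What follows is not the Certlock code, just an illustration of the rule as described: the names are invented, SHA-256 stands in for "a hash", only three of the cached fields are kept, and leaving the cache untouched on a warning is my assumption.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class CertRecord:
    cert_hash: str
    ca_name: str
    ca_country: str


def fingerprint(cert_bytes: bytes) -> str:
    # Assumption: SHA-256; the quoted description only says "a hash".
    return hashlib.sha256(cert_bytes).hexdigest()


def check_visit(cache: dict, site: str, cert_bytes: bytes, ca_name: str, ca_country: str) -> str:
    """Return 'first-visit', 'ok' or 'warn' following the quoted rule."""
    new = CertRecord(fingerprint(cert_bytes), ca_name, ca_country)
    old = cache.get(site)
    if old is None:
        cache[site] = new
        return "first-visit"
    if old.cert_hash == new.cert_hash:
        return "ok"  # certificate unchanged
    if old.ca_name == new.ca_name or old.ca_country == new.ca_country:
        cache[site] = new
        return "ok"  # changed, but same CA or same country
    return "warn"    # issuing CA's country differs: cache left as it was (assumption)


cache = {}
print(check_visit(cache, "bank.example", b"cert-1", "CA One", "KR"))
print(check_visit(cache, "bank.example", b"cert-1", "CA One", "KR"))
print(check_visit(cache, "bank.example", b"cert-2", "CA Two", "KR"))
print(check_visit(cache, "bank.example", b"cert-3", "CA Three", "EC"))
```

Note how much rides on the country comparison: a swap to any other CA in the same country sails through without a word to the user.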

This isn't new. The authors credit recent work, but no further back than a year or two. Which I find sad because the important work done by TrustBar and Petnames is pretty much forgotten.

But it is encouraging that the security models are battling it out, because it gets people thinking, and challenging their assumptions. Only actual produced code, and garnered market share is likely to change the security benefits of the users. So while we can criticise the country approach (it assumes a sort of magical touch of law within the countries concerned that is already assumed not to exist, by dint of us being here in the first place), the country "proxy" is much better than nothing, and it gets us closer to the real information: the CA.

From a market for security pov, it is an interesting period. The first attempts around 2004-2006 in this area failed. This time, the resurgence seems to have a little more steam, and possibly now is a better time. In 2004-2006 the threat was seen as more or less theoretical by the hoi polloi. Now however we've got governments interested, consumers sick of it, and the entire military-industrial complex obsessed with it (both in participating and fighting). So perhaps the newcomers can ride this wave of FUD in, where previous attempts drowned far from the shore.

May 25, 2009

The Inverted Pyramid of Identity

Let's talk about why we want Identity. There appear to be two popular reasons why Identity is useful. One is as a handle for the customer experience, so that our dear user can return day after day and maintain her context.

The other is as a vector of punishment. If something goes wrong, we can punish our user, no longer dear.

It's a sad indictment of security, but it does seem as if the state of the security nation is that we cannot design, build and roll-out secure and safe systems. Abuse is likely, even certain, sometimes relished: it is almost a business requirement for a system of value to prove itself by having the value stolen. Following the inevitable security disaster, the business strategy switches smoothly to seeking who to blame, dumping the liability and covering up the dirt.

Users have a very different perspective. Users are well aware of the upsides and downsides, they know well: Identity is for good and for bad.

Indeed, one of the persistent fears of users is that an identity system will be used to hurt them. Steal their soul, breach their privacy, hold them to unreasonable terms, ultimately hunt them down and hurt them, these are some of the thoughts that invasive systems bring to the mind of our dear user.

This is the bad side of identity: the individual and the system are "in dispute," it's man against the machine, Jane against Justice. Unlike the usage case of "identity-as-a-handle," which seems to be relatively well developed in theory and documentation, the "identity-as-punishment" metaphor seems woefully inadequate. It is little talked about, it is the domain of lawyers and investigators, police and journalists. It's not the domain of technologists. Outside the odd and forgettable area of law, disputes are a non-subject, and not covered at all where I believe it is required the most: marketing, design, systems building, customer relations, costs analysis.

Indeed, disputes are taboo for any business.

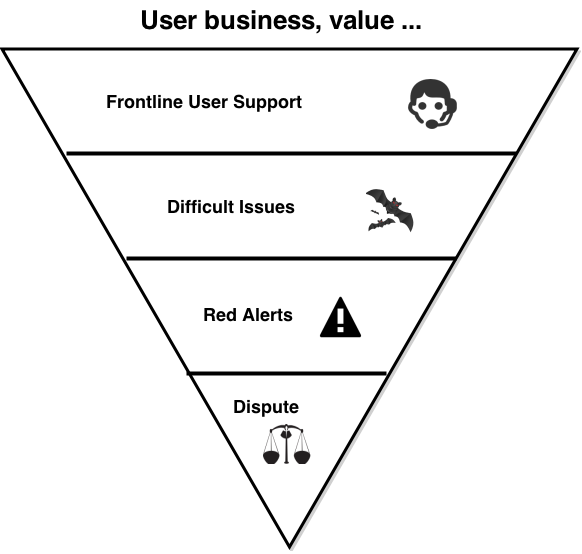

Yet, this is unsustainable. I like to think of good Internet (or similar) systems as an inverted pyramid. On the top, the mesa, is the place where users build their value. It needs to be flat and stable. Efficient, and able to expand horizontally without costs. Hopefully it won't shift around a lot.

Dig slightly down, and we find the dirty business of user support. Here, the business faces the death of a 1000 tiny support cuts. Each trivial, cheap and ignorable, except in the aggregate. Below them deeper down are the 100 interesting support issues. Deeper still, the 10 or so really serious red alerts. Of which one becomes a real dispute.

The robustness of the pyramid is based on the relationship between the dispute at the bottom, the support activity in the middle, and the top, as it expands horizontally for business and for profit.

Your growth potential is teetering on this one thing: the dispute at the apex of the pyramid. And, if you are interested in privacy, this is the front line, for a perverse reason: this is where it is most lost. Support and full-blown disputes are the front line of privacy and security. Events in this area are destroyers of trust, they are the bane of marketing, the nightmare of PR.

Which brings up some interesting questions. If support is such a destroyer of trust, why is it an afterthought in so many systems? If the dispute is such a business disaster, why is resolution not covered at all? Or hidden, taboo? Or, why do businesses think that their dispute resolution process starts with their customers' identity handles? And ends with the lawyers?

Here's a thought: If badly-handled support and dispute events are leaks of privacy, destroyers of trust, maybe well-handled events are builders of trust? Preservers of privacy?

If that is plausible, if it is possible that good support and good dispute handling build good trust ... maybe a business objective is to shift the process: support designed up front, disputes surfaced, all of it open? A mature and trusted provider might say: we love our disputes, we promote them. Come one, come all. That's how we show we care!

An immature and untrusted provider will say: we have no disputes, we don't need them. We ask you the user to believe in our promise.

The principle that the business hums along on top of an inverted pyramid, that rests ultimately on a small powerful but brittle apex, is likely to cause some scratching of technophiliac heads. So let me close the circle, and bring it back to the Identity topic.

If you do this, if you design the dispute mechanism as a fully cross-discipline business process for the benefit of all, not only will trust go positive and privacy become aligned, you will get an extra bonus. A carefully constructed dispute resolution method frees up the identity system, as the latter no longer has to do double duty as the user handle *and* the facade of punishment. Your identity system can simply concentrate on the user's experience. The dark clouds of fear disappear, and the technology has a chance to work how the techies said it would.

We can pretty much de-link the entire identity-as-handles from the identity-as-punishment concept. Doing that removes the fear from the user's mind, because she can now analyse the dispute mechanism on its merits. It also means that the Identity system can be written only for its technical and usability merits, something that we always wanted to do but never could, quite.

(This is the rough transcript of a talk I gave at Identity & Privacy conference in London a couple of weeks ago. The concept was first introduced at LexCybernetoria, it was initially tried by WebMoney, partly explored in digital gold currencies, and finally was built in CAcert's Arbitration project.)

March 15, 2009

... and then granny loses her house!

A canonical question in cryptography was about how much money you could put over a digital signature, and a proposed attack would often end, "and then Granny loses her house!" It might be seen as a sort of reminder that the crypto only went so far, and needed to be backed by institutional support for a lot of things.

And now comes Darren with news that Granny is losing her house, proverbially at least. In a somewhat imprecise article (written by a lawyer?) in the Times:

... The ingenuity of the heists carried out ranges from selling property they do not own to buying property at inflated valuations and making off with the difference.

Critical to many of these scams is the use of stolen identities. According to many solicitors specialising in the field, the key context for the problem was the dash into deregulation and e-commerce earlier this decade.

There was a view throughout the profession that the abolition of documents of title and reliance upon electronic records would contribute to fraud. And so it has proved, Samson says. All this information is open to view through the internet so a fraudster can see exactly who owns a property, assume his or her identity and then sell it.

While this may sound absurd for owner-occupied homes, it is all too easy, for example, with vacant properties. What's more the rightful owner won't even know that it has happened, he adds.

So the basic fraud appears to be: find a property that is not cared for by its owner. Assume the owner's identity. Sell it. Or, buy it!

To put the hat on what seems a complete botch-up by lawmakers and regulators, the effect of the Land Registration Act 2002 was that the fraudulent purchasers are given a legal title to their purchase. If the fraudster succeeds in having title registered in his name he can mortgage the property, Samson says. The true owner may be able to have the transfer to the fraudster reversed by rectification but he will still take the property subject to the mortgage.

Now, within that article, there is no shortage of solicitors saying "we told you so!" But the real systemic causes of this fraud will need more digging. We can guess what the first cause is: identity theft. That is, high levels of dependency on the fictitious notion of identity as a protector of security. Yes, that will always get you, and it will likely take another decade before the British populace lose their current faith in identity.

The second cause however is more subtle. As pointed out by Eliana Morandi in a 2007 article, "The role of the notary in real estate conveyancing," problems like that do not happen in continental Europe (see _Digital Evidence and Electronic Signature Law Review_, 2007). What's the difference? Whereas the English common law system requires each party to have independent representation, the continental system requires one party, the notary, to secure the entire deed for both the buyer and seller. And take the full responsibility, so issues such as this are solved easily:

In cases where, for example, a lender whose mortgage is being paid off has no lawyer, the conveyancer may face claims for having not fully observed the Land Registry's practice guide. And instead of the Land Registry paying compensation, it will look to the solicitors to reimburse the victims.

Warren Gordon, of Olswang, who sits on the Law Society's conveyancing and land law committee, protests that it is unrealistic to expect solicitors to do a comprehensive check on someone who is not their client. It's unfair to put all the risk on the solicitor, including asking him or her to sign off on the identity of someone he or she does not act for, he says.

Meanwhile, Paul Marsh, president of the Law Society, points the finger instead at the bankers who are providing fraudsters with the funds to perpetrate their dodgy deals. At the top end we see vast bonuses being paid to bankers at board level for what turn out to be disastrous investments, while at the grass roots local bankers are under pressure to make loans to sell money without even the most basic procedures in place to prevent fraud, he says. The banks are refusing to take responsibility for this because they know that they can pin it on the solicitors.

The bottom line of course is which system is more efficient in the long run. The European Notary may charge more money for the perfect transaction. If the English solicitors can undercut that price, and reduce the fraud such that the result is still better, it is a good deal. Which is it? The abstract to Morandi's article gives a clue:

The role of the notary in real estate conveyancing

Eliana Morandi sets out the role of the civil law notary in the context of real estate conveyancing, illustrating how more effective and less costly it is when undertaken by civil law notaries.

(Unfortunately my copy has conveyed itself into hiding.) If fraud rises in Britain, we will need changes. Now, we've seen with the rise of identity fraud in the USA that there has been zero incentive for the players to change the way identity is used, so we can predict that the Brits will not change the registry practice. Also, the likelihood of the solicitors giving up their lucrative representational practice is pretty low.

However the complicated notarial versus solicitorial versus identity versus registry war pans out in the long run, it seems that solicitors are going to have to bear increased responsibility to check the identity of their counterparty. Perhaps they should pop into the Identity and Privacy forum, 14th-15th May over in London's Charing Cross Hotel? Probably a bargain if it saves them from granny's wrath.

February 20, 2009

The mystery of Ireland's worst driver

Chris Walsh spotted this:

The mystery of Ireland's worst driver

Details of how police in the Irish Republic finally caught up with the country's most reckless driver have emerged, the Irish Times reports.

He had been wanted from counties Cork to Cavan after racking up scores of speeding tickets and parking fines.

However, each time the serial offender was stopped he managed to evade justice by giving a different address.

But then his cover was blown.

It was discovered that the man every member of the Irish police's rank and file had been looking for - a Mr Prawo Jazdy - wasn't exactly the sort of prized villain whose apprehension leads to an officer winning an award.

In fact he wasn't even human.

"Prawo Jazdy is actually the Polish for driving licence and not the first and surname on the licence," read a letter from June 2007 from an officer working within the Garda's traffic division.

"Having noticed this, I decided to check and see how many times officers have made this mistake.

"It is quite embarrassing to see that the system has created Prawo Jazdy as a person with over 50 identities."